Implementing a Real-Time Ad Spend Monitoring System to Prevent Wasted Spend

A clothing brand spending $28k/month on paid media had a 1.8x blended ROAS (net of returns) and no reliable way to know which campaigns had fallen below break-even. Here's the monitoring system we built to recover $6,200/month in structural waste and bring ROAS to 4.9x.

$6,200 in monthly waste recovered within 8 weeks

4.9x ROAS after rebuild, up from 1.8x

44% reduction in blended customer acquisition cost

From audit to full system deployment

The Opportunity

Paid media waste doesn't announce itself. It accumulates quietly between review cycles — in ad sets that crossed the break-even threshold three weeks ago and kept running, in campaigns that were profitable on paper at the campaign level but were subsidized by one high-performing SKU carrying the rest, in branded spend that was defending searches the brand already owned organically. By the time a weekly or monthly reporting cycle surfaces the problem, the budget has already been burned.

The client, a clothing ecommerce brand doing approximately $6M in annual revenue, was spending $28,000 per month across Meta and Google. Their reported blended ROAS was 2.4x on gross revenue (1.8x once returns were netted out), a figure that masked significant structural variation across campaigns. At the SKU level, some product categories were generating 6x returns. Others were generating 0.9x. The aggregated number looked acceptable. The underlying capital allocation was not.

The marketing team reviewed performance weekly. In a week, a negative-ROAS ad set running at $400/day produces $2,800 in waste before anyone acts on the signal. Even high-performing campaigns contained ads and keywords that underperformed. In both cases, if the wasted budget were reallocated to profitable SKUs, campaigns, and ads, the returns would compound. The constraint wasn't the strategy. It was the signal latency between when performance degraded and when anyone saw it.

Why Clothing Is Particularly Vulnerable to Spend Waste

Fashion and apparel paid media has structural characteristics that accelerate waste accumulation compared to most ecommerce categories:

High SKU velocity

Seasonal inventory turnover means campaign structures built for spring collections can still be running against summer inventory, targeting the wrong buyer segments with the wrong creative context.

Audience fatigue cycles faster

Fashion creative saturates audiences more quickly than utility purchases. An ad set that was profitable in week one can cross the break-even line by week three as frequency builds and click-through rates compress.

Return rates distort ROAS

Clothing has return rates of 20–40% in most categories. A campaign reporting 3x ROAS before returns may be running at 1.8x after returns are factored in. Most platforms report on gross revenue, not net-of-returns contribution.
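The return-rate adjustment above is simple arithmetic, sketched here under a simplifying assumption: returned orders are refunded in full and return-handling costs are ignored. The function name `net_roas` is illustrative, not part of any platform API.

```python
def net_roas(gross_roas: float, return_rate: float) -> float:
    """ROAS after refunded revenue is removed.

    Assumption: returns are refunded in full; shipping and
    restocking costs are not modeled.
    """
    if not 0.0 <= return_rate < 1.0:
        raise ValueError("return_rate must be in [0, 1)")
    return gross_roas * (1.0 - return_rate)


# A 3x platform-reported ROAS across the 20-40% return range cited above.
for rate in (0.20, 0.30, 0.40):
    print(f"return rate {rate:.0%}: net ROAS {net_roas(3.0, rate):.2f}x")
```

At a 40% return rate, the 3x gross figure lands at the 1.8x mentioned above.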

Margin varies significantly by SKU

A basic tee and an outerwear piece may have identical price points but very different contribution margins. Campaigns optimized by revenue don't know the difference and will happily spend equally against both.

Campaign proliferation

Clothing brands tend to run large numbers of simultaneous campaigns: by category, by season, by audience segment, by creative type. In a 30-campaign account reviewed once per week, each underperforming campaign can run for up to a full week before anyone acts on it.

Healthy campaign ROAS masking sub-component waste

A campaign reporting a 3.5x blended ROAS may contain individual ad sets, keywords, or placements running well below break-even. One high-performing creative or audience subsidizes the rest. Platform reporting shows the blended number — it doesn't flag the underperformers dragging it down. Without ad-set and keyword-level monitoring, low-performing components keep spending indefinitely while the campaign-level number looks acceptable.

Why campaign-level ROAS is not enough

Campaign-level ROAS is a blended, spend-weighted average. A 4x campaign can contain a 7x ad set running one high-performing creative, a 2x ad set with adequate performance, and a 0.6x ad set burning budget against an exhausted audience. The top-line number looks healthy. Depending on how spend is split, a large share of the budget within it may not be. Effective monitoring must operate at the ad set, keyword, and placement level, not just the campaign level, because that is where waste accumulates while the aggregate number stays green.
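To make the masking effect concrete, here is a minimal sketch with made-up daily figures for three ad sets at 7x, 2x, and 0.6x. The spend split, the `AdSet` type, and the 1.8x break-even value are all assumptions chosen for illustration, not client data.

```python
from dataclasses import dataclass


@dataclass
class AdSet:
    name: str
    spend: float
    revenue: float

    @property
    def roas(self) -> float:
        return self.revenue / self.spend if self.spend else 0.0


def blended_roas(ad_sets):
    """Spend-weighted ROAS, which is what a campaign-level report shows."""
    total_spend = sum(a.spend for a in ad_sets)
    total_revenue = sum(a.revenue for a in ad_sets)
    return total_revenue / total_spend if total_spend else 0.0


def below_break_even(ad_sets, break_even):
    """Ad sets whose own ROAS is under the break-even threshold."""
    return [a for a in ad_sets if a.roas < break_even]


# Hypothetical daily figures.
campaign = [
    AdSet("hero_creative", spend=500.0, revenue=3500.0),      # 7.0x
    AdSet("adequate", spend=300.0, revenue=600.0),            # 2.0x
    AdSet("exhausted_audience", spend=200.0, revenue=120.0),  # 0.6x
]

print(f"campaign ROAS: {blended_roas(campaign):.2f}x")
for ad_set in below_break_even(campaign, break_even=1.8):
    print(f"below break-even: {ad_set.name} at {ad_set.roas:.1f}x on ${ad_set.spend:.0f}/day")
```

With this split the campaign reports roughly 4.2x while one ad set spends $200/day at 0.6x, which is the pattern ad-set-level monitoring is meant to expose.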

For this client, the combination of a large campaign structure, high SKU velocity, and weekly-only review cycles meant that structural waste had been compounding for months before the audit surfaced it. The question wasn't whether there was waste — it was whether the team had the infrastructure to catch it fast enough to act on it.

A monitoring system that reduces the lag between performance degradation and team response from seven days to one day eliminates six days of avoidable waste per underperforming campaign per week. Across a 30-campaign account, that arithmetic adds up quickly.

The Solution

We built a Real-Time Ad Spend Monitoring System configured specifically for the client's account structure, margin profile, and campaign taxonomy. The system replaces judgment-based weekly reviews with codified kill and scale criteria evaluated daily, surfaces anomalies before they become material waste events, and produces a daily performance summary the team can act on in under 15 minutes.

The system was built around four components: a margin-adjusted performance baseline, automated threshold monitoring, a daily digest with prioritized action flags, and a kill/scale decision framework the team operates without analyst support.

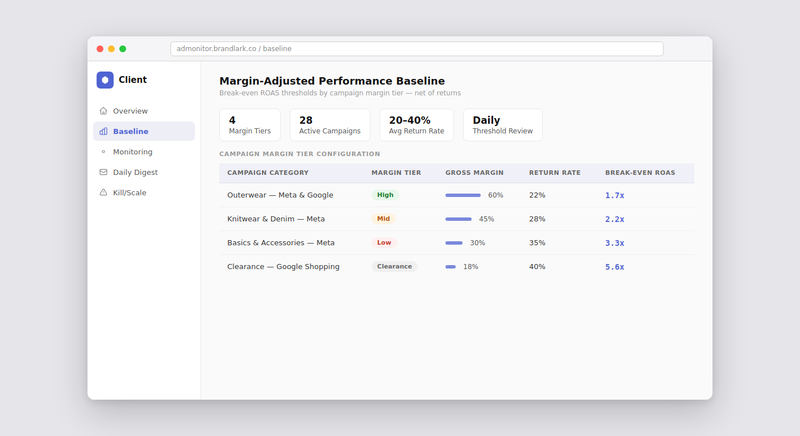

Component 1: Margin-Adjusted Performance Baseline

Before any monitoring can be meaningful, the system needs to know what "break-even" actually means at the campaign and ad set level — net of returns, net of COGS, and accounting for the margin variation across the client's SKU mix. We mapped the client's product catalog into four margin tiers: high-margin outerwear (55–65% gross margin), mid-margin knitwear and denim (40–50%), lower-margin basics and accessories (25–35%), and clearance (variable, floor of 15%). Each campaign was tagged to its primary margin tier. The break-even ROAS threshold is different for each tier: a campaign running outerwear at 2.5x ROAS is profitable; the same 2.5x ROAS on basics is not. The baseline makes that distinction explicit and enforces it automatically.

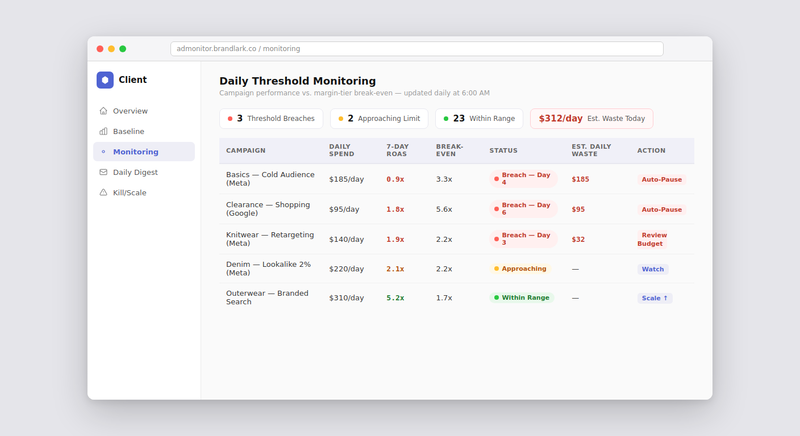
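The tier logic can be sketched as follows. The margin ranges are the ones given above; the break-even formula (one divided by gross margin, adjusted for returns) is a common simplification and an assumption here, not necessarily the exact calculation used in the client's baseline. Clearance is omitted because its margin is variable.

```python
# Gross margin ranges per tier, taken from the tiers described above.
MARGIN_TIERS = {
    "outerwear": (0.55, 0.65),
    "knitwear_denim": (0.40, 0.50),
    "basics_accessories": (0.25, 0.35),
}


def break_even_roas(gross_margin: float, return_rate: float = 0.0) -> float:
    """ROAS at which margin on kept revenue exactly covers ad spend.

    Assumption: returned goods are refunded in full and their COGS recovered.
    """
    kept_margin = gross_margin * (1.0 - return_rate)
    if kept_margin <= 0:
        raise ValueError("margin after returns must be positive")
    return 1.0 / kept_margin


def break_even_range(tier: str, return_rate: float = 0.0):
    """(best case, worst case) break-even ROAS for a tier."""
    low_margin, high_margin = MARGIN_TIERS[tier]
    # A lower margin requires a higher ROAS to break even.
    return break_even_roas(high_margin, return_rate), break_even_roas(low_margin, return_rate)


for tier in MARGIN_TIERS:
    best, worst = break_even_range(tier)
    verdict = "profitable" if 2.5 >= worst else "not profitable" if 2.5 < best else "depends on mix"
    print(f"{tier}: break-even {best:.2f}x to {worst:.2f}x, 2.5x ROAS is {verdict}")
```

Before returns, a 2.5x ROAS clears the outerwear range entirely and falls short of the basics range entirely, which matches the distinction the baseline enforces.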

Component 2: Daily Threshold Monitoring and Anomaly Detection

The monitoring layer runs a daily snapshot at every level of the account: campaign, ad set, keyword, and placement. This matters because campaign-level ROAS is a blended number — a healthy average can hide individual components running well below break-even, covered by the top performers. When any unit crosses a configured threshold (three consecutive days below break-even ROAS, a keyword spending above the CPA ceiling with no conversions, frequency above the saturation benchmark), the system flags it with the breach detail, cumulative cost, and a recommended action. The team receives a signal it can act on, not raw data it has to interpret.

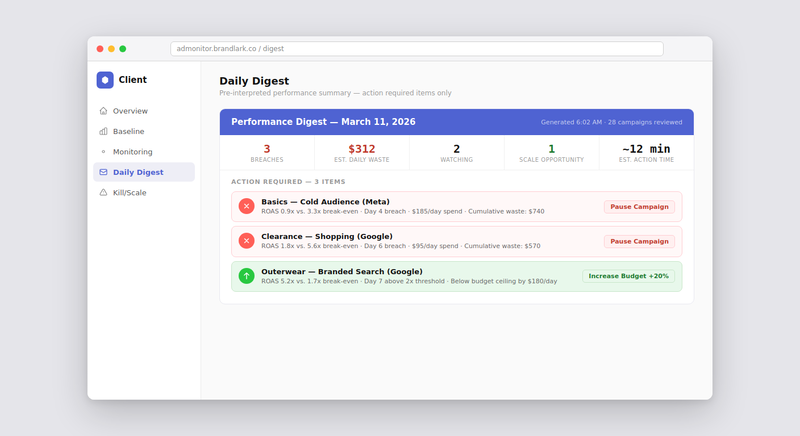
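A minimal sketch of the three threshold checks described above, assuming daily snapshots have already been pulled from the ad platforms. `DailySnapshot`, `check_unit`, and the parameter values are hypothetical names and numbers for illustration; the real thresholds are configured per margin tier.

```python
from dataclasses import dataclass


@dataclass
class DailySnapshot:
    """One day of data for a campaign, ad set, keyword, or placement."""
    spend: float
    revenue: float
    conversions: int
    frequency: float


def trailing_days_below(history, break_even_roas):
    """Count the most recent consecutive days with ROAS below break-even."""
    count = 0
    for day in reversed(history):
        if day.spend > 0 and day.revenue / day.spend < break_even_roas:
            count += 1
        else:
            break
    return count


def check_unit(history, break_even_roas, cpa_ceiling, frequency_cap, streak_days=3):
    """Return readable flags for one unit; an empty list means no action."""
    flags = []
    streak = trailing_days_below(history, break_even_roas)
    if streak >= streak_days:
        cost = sum(day.spend for day in history[-streak:])
        flags.append(f"below break-even for {streak} days, ${cost:,.0f} spent")
    total_spend = sum(day.spend for day in history)
    if total_spend > cpa_ceiling and sum(day.conversions for day in history) == 0:
        flags.append(f"${total_spend:,.0f} spent with no conversions")
    if history and history[-1].frequency > frequency_cap:
        flags.append(f"frequency {history[-1].frequency:.1f} above cap {frequency_cap:.1f}")
    return flags


# Hypothetical ad set: three days at 0.75x against a 2.0x break-even.
history = [DailySnapshot(spend=200.0, revenue=150.0, conversions=2, frequency=2.0)] * 3
for flag in check_unit(history, break_even_roas=2.0, cpa_ceiling=50.0, frequency_cap=3.5):
    print(flag)
```

Each flag carries the breach detail and cumulative cost, so the recommended action can be attached without further analysis.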

Component 3: Daily Digest with Prioritized Action Queue

Each morning, the client's marketing manager receives a structured daily digest: a one-page summary of the prior day's performance against the baseline, a list of flagged campaigns sorted by estimated daily waste impact, and a queue of pending decisions with recommended actions pre-populated from the decision framework. The digest is designed to be actioned in under 15 minutes. Campaigns without flags require no action. Flagged campaigns have a clear recommended action — pause, reduce budget, or escalate for creative refresh — with the supporting data already present. There is no analysis required before acting. The system has already done it.

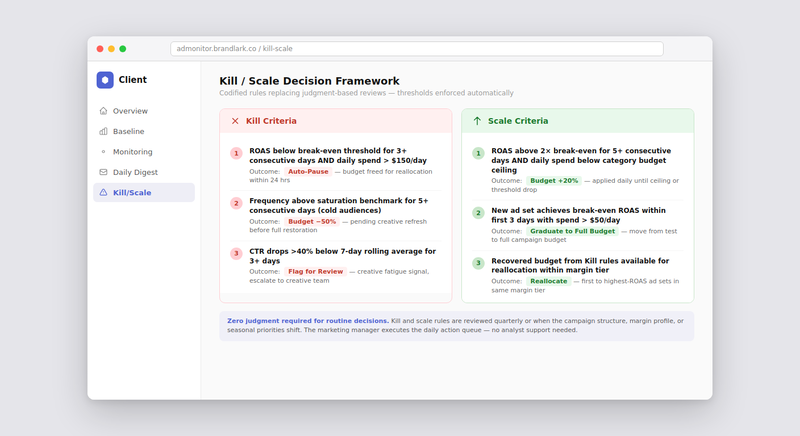
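The ordering of the action queue can be sketched like this. The waste estimate used here (the share of spend not covered by margin at the current ROAS) is one possible definition and an assumption; a simpler alternative is to count the full daily spend of any unit below break-even. Campaign names and figures are invented.

```python
def estimated_daily_waste(daily_spend, roas, break_even_roas):
    """Spend not covered by margin at the current ROAS (0 at or above break-even)."""
    if roas >= break_even_roas:
        return 0.0
    return daily_spend * (1.0 - roas / break_even_roas)


def build_digest(flagged_units):
    """Attach a waste estimate to each flagged unit and sort largest first."""
    rows = [
        {**unit, "daily_waste": estimated_daily_waste(
            unit["daily_spend"], unit["roas"], unit["break_even"])}
        for unit in flagged_units
    ]
    return sorted(rows, key=lambda row: row["daily_waste"], reverse=True)


# Hypothetical flagged units with pre-populated recommended actions.
flagged = [
    {"name": "denim_prospecting", "daily_spend": 250.0, "roas": 1.8,
     "break_even": 2.0, "action": "reduce budget"},
    {"name": "basics_retargeting", "daily_spend": 400.0, "roas": 0.9,
     "break_even": 3.0, "action": "pause"},
    {"name": "outerwear_lookalike", "daily_spend": 600.0, "roas": 1.5,
     "break_even": 1.6, "action": "escalate for creative refresh"},
]

for row in build_digest(flagged):
    print(f"{row['name']}: ~${row['daily_waste']:.0f}/day at risk, recommended: {row['action']}")
```

Sorting by estimated waste rather than by spend puts the unit that is furthest below its own break-even at the top, even when a larger campaign is only marginally under.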

Component 4: Codified Kill and Scale Decision Framework

The decision framework converts monitoring flags into pre-approved actions, removing the judgment call from routine decisions. The client defined the thresholds: pause any ad set below break-even ROAS for three consecutive days above $150/day spend; cut budget 50% on campaigns with frequency above the saturation benchmark for five days; increase budget 20% on ad sets running above 2x break-even for five days with room to scale. With those rules codified, the team acts on a flag rather than deliberating over it.
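The three rules above translate directly into code. This sketch applies them to a single generic unit for brevity, although the source applies the pause and scale rules to ad sets and the frequency rule to campaigns. The `UnitStatus` fields and the order in which rules are checked are assumptions.

```python
from dataclasses import dataclass


@dataclass
class UnitStatus:
    daily_spend: float
    days_below_break_even: int      # consecutive days under break-even ROAS
    days_above_frequency_cap: int   # consecutive days over the saturation benchmark
    days_above_2x_break_even: int   # consecutive days over twice break-even ROAS
    has_room_to_scale: bool


def decide(status: UnitStatus) -> str:
    """Map a unit's status to a pre-approved action."""
    # Kill rule: below break-even for three days at more than $150/day.
    if status.days_below_break_even >= 3 and status.daily_spend > 150:
        return "pause"
    # Fatigue rule: frequency above the saturation benchmark for five days.
    if status.days_above_frequency_cap >= 5:
        return "cut budget 50%"
    # Scale rule: above 2x break-even for five days with headroom.
    if status.days_above_2x_break_even >= 5 and status.has_room_to_scale:
        return "increase budget 20%"
    return "no action"


print(decide(UnitStatus(220.0, 3, 0, 0, False)))  # pause
print(decide(UnitStatus(500.0, 0, 0, 6, True)))   # increase budget 20%
```

Checking the kill rule first is a deliberate choice in this sketch: a unit that is both losing money and saturated gets paused instead of trimmed.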

Infrastructure the Client Owns

The monitoring system was deployed as client infrastructure, not a managed service. The marketing team operates the daily digest, adjusts thresholds as the campaign structure or margin profile changes, and adds new campaigns to the monitoring framework without external support. The decision framework is documented internally. New team members can be onboarded to it in a single session.

No dependency on platform-native alerts

Meta and Google's built-in alerting operates at the campaign level and evaluates against targets the advertiser sets — which are often misconfigured or too broad to catch ad-set-level waste. The monitoring system operates independently of platform-native tools and applies the client's actual margin economics, not platform-default optimization targets.

Margin tier configuration updates with the catalog

As the client's product mix shifts across seasons, the margin tier tags are updated to reflect the new catalog composition. The monitoring thresholds adjust automatically. A system built on generic platform targets becomes misconfigured as the catalog changes. A system built on the client's own margin data stays accurate.

Operated by the marketing team, not the analyst

Before the system, identifying underperforming campaigns required pulling platform data into a spreadsheet, calculating adjusted ROAS by margin tier, and comparing against break-even thresholds — a task that took 2–3 hours per review cycle and was only done weekly. After the system, the daily digest surfaces the same analysis in a pre-interpreted format the marketing manager acts on without analytical support.

The Impact

The audit phase identified $6,200/month in spend on ad sets running at structurally negative ROAS: ad sets that had been below their margin-tier break-even threshold for weeks without triggering a review. They were paused within the first week, and the budget was reallocated to the highest-performing ad sets within the same categories. Blended ROAS (net of returns) moved from 1.8x to 4.9x over the following six weeks as the monitoring system caught new threshold breaches within days instead of after a weekly review cycle.

| Metric | Before | With Monitoring System |
|---|---|---|
| Blended ROAS (net of returns) | 1.8x | 4.9x |
| Monthly wasted spend | $6,200+ | Near zero (caught within 1–3 days) |
| Customer acquisition cost | Baseline | −44% |
| Performance review cadence | Weekly (7-day lag) | Daily digest (1-day lag) |
| Time to identify underperformers | 2–3 hrs/week (manual) | Under 15 min/day (pre-interpreted) |
| Break-even threshold basis | Platform ROAS targets (gross) | Margin-tier adjusted, net of returns |
| Kill/scale decision time | 3–7 days (judgment-based) | 1–3 days (threshold-triggered) |
| Team dependency on analyst | Required for weekly review | Not required for daily operations |
| System ownership | Platform-dependent alerts | Client-owned, team-operated |

The Compounding Cost of Signal Latency

A campaign running at $300/day below break-even ROAS costs $2,100 per week in waste. At weekly review cadence, that waste is structural — it happens every week because the signal arrives too late to prevent it. Reducing the lag from seven days to one day eliminates six of those seven days of waste without changing the budget, the campaign structure, or the creative. The monitoring system doesn't improve the marketing. It removes the delay between when the marketing stops working and when the team knows about it.
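The latency arithmetic above, written out. Like the paragraph, this sketch counts the full daily spend of a below-break-even campaign as waste; `waste_before_detection` is an illustrative name.

```python
def waste_before_detection(daily_spend: float, detection_lag_days: int) -> float:
    """Spend that runs before the team sees the signal and can act."""
    return daily_spend * detection_lag_days


weekly_review = waste_before_detection(300.0, detection_lag_days=7)
daily_digest = waste_before_detection(300.0, detection_lag_days=1)

print(f"weekly review: ${weekly_review:,.0f} spent before detection")
print(f"daily digest:  ${daily_digest:,.0f} spent before detection")
print(f"avoided per underperforming campaign per week: ${weekly_review - daily_digest:,.0f}")
```

Six of the seven days, or $1,800 of the $2,100, disappear from the waste figure purely because the signal arrives sooner.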

The real-time monitoring system is one component of how Brandlark builds Acquisition Efficiency for ecommerce brands: the systematic elimination of structurally negative-ROAS spend and the reallocation of recovered budget to the highest-return opportunities within the existing account. For this clothing client, the $6,200/month in recovered waste was partially reinvested into the top-performing outerwear campaigns, producing incremental revenue of approximately $180,000 over the eight-week engagement period.

A monitoring system that catches performance degradation in one day rather than seven doesn't just prevent waste. It changes how the team relates to the account. Weekly reviews produce anxiety because the team knows the numbers may have deteriorated since the last look. Daily digests produce confidence because the team knows they will see a problem before it becomes material. That operational shift has downstream effects on the speed and quality of creative and budget decisions.

We use Growth Capital Efficiency (GCE) to determine how recovered budget is reallocated across channels and campaigns. For this client, GCE analysis confirmed that outerwear on Meta and branded search on Google represented the highest-return deployment of the recovered spend — a reallocation that was only possible because the monitoring system surfaced the waste in the first place.